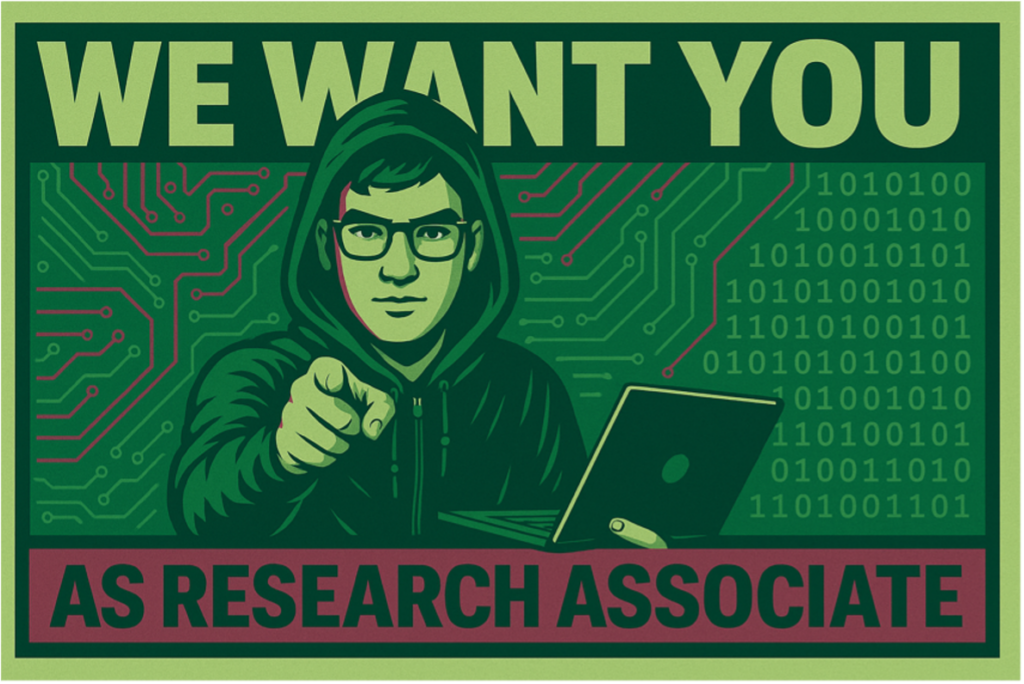

We are currently looking for engaged students interested in voluntary, hands-on research experience in digital data collection and analysis. As a Junior Research Associate, you will collaborate as a lab affiliate on any of our ongoing projects, based on your interests. You are also welcome to pitch your own project. The role gives you an opportunity to hone your skills by working with experienced researchers in data science, programming, machine learning, digital ethnography, digital media, and digital democracy.

More information on how to apply is available in this PDF.

Deadline: 22nd September 2025